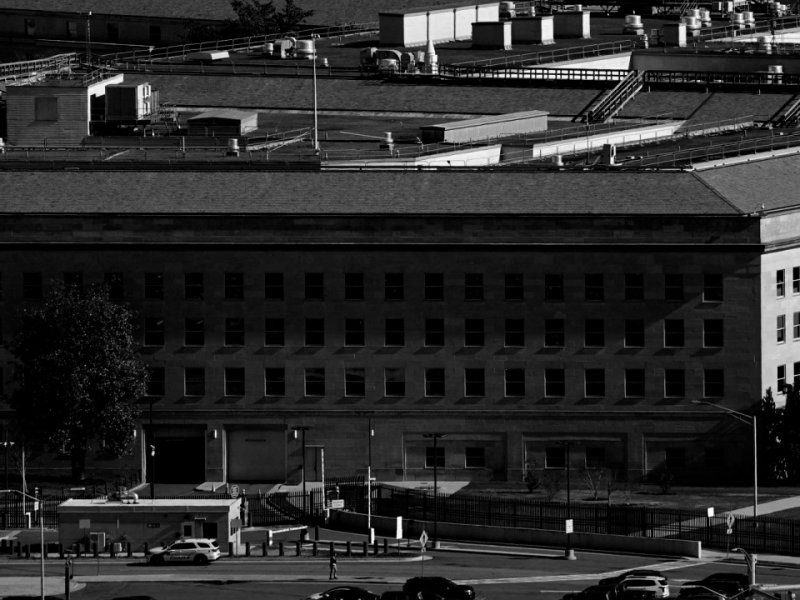

The U.S. Department of Defense has designated Anthropic as a supply chain risk to national security, triggering widespread concern across the technology sector. The decision followed a breakdown in contract negotiations between the Pentagon and the AI firm, particularly over restrictions tied to its flagship model Claude. Defense officials argued that limitations on how the military could use the technology posed operational risks. The designation initially raised fears that companies working with the government would be forced to cut ties with Anthropic.

Pentagon Labels Anthropic a Supply Chain Risk Amid AI Policy Dispute

Anthropic CEO Dario Amodei pushed back, clarifying that the designation applies only to the use of Claude within specific defense-related contracts and does not prohibit broader commercial partnerships. Major tech firms including Microsoft, Google, and Amazon confirmed that Anthropic’s tools will remain available for non-military applications. The dispute centers on ethical concerns raised by Anthropic, including its opposition to autonomous weapons and mass surveillance. The Pentagon declined to accept these as contractual limits.

Summary

The conflict between the Pentagon and Anthropic highlights growing tensions over the role of artificial intelligence in defense. While the designation signals stricter scrutiny of AI providers, its limited scope means Anthropic’s broader commercial operations remain largely unaffected.

Comments (6)

Incredible breakthrough! If these efficiency numbers hold up at scale it could dramatically change the cost of running AI workloads.

AI ethics vs defense needs 🤔 tough balance

Anthropic stance on weapons is interesting 💭

Pentagon concerns highlight AI risks 🚨

Good to see limits on military AI use

Tech and security clash continues 🔍
